Master data governance defines how organisations manage the accuracy, ownership, and quality of their most critical data. This guide covers the framework, principles, process, and common failure modes that leadership teams should understand.

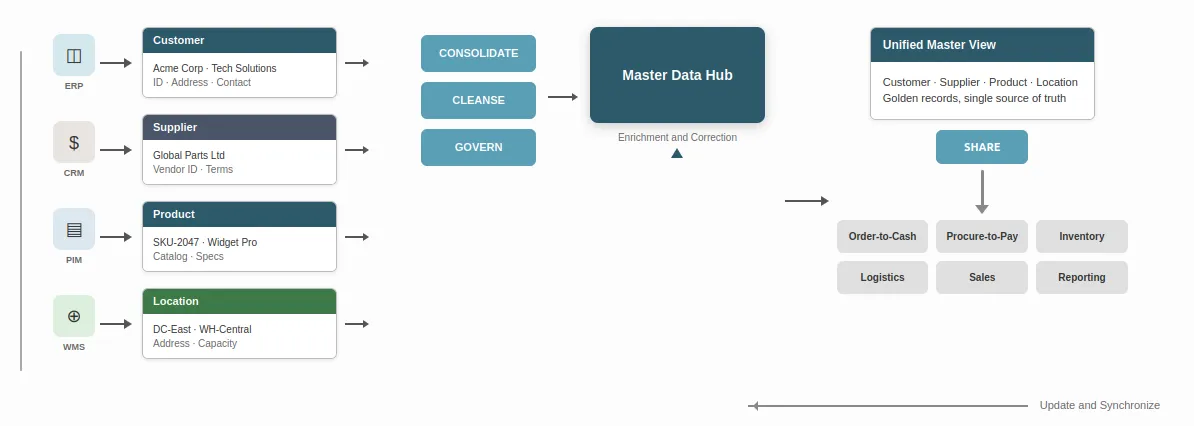

Master data governance is the discipline of defining who owns critical data, how its quality is maintained, and how decisions about that data are made across the organisation. It applies to the core reference data that multiple systems and teams depend on — customer records, supplier details, product catalogues, location codes, and employee data.

Without master data governance, organisations operate on data that looks consistent but is not. Different systems hold different versions of the same customer. Product codes do not match between warehouse and finance. Supplier records are duplicated, outdated, or incomplete. Every process that depends on this data inherits the problem.

This guide covers the essentials: what master data is, how master data governance differs from master data management, what governance involves, and where it most commonly fails. It is written for leadership teams evaluating their data governance approach — not for tool selection or implementation planning.

Master data describes the core building blocks upon which your business operates — the people, places, and things that interact in your processes and transactions. It is the curated reference information that multiple systems and teams depend on.

Common master data domains include customer, supplier, product, location, and employee data.

Master data is distinct from transactional data. Transactions record events — an order placed, a payment made, a shipment delivered. Master data describes the entities involved in those events. When master data is inconsistent, every transaction that references it inherits the problem. Getting master data right from the beginning matters because fixing it later is far more expensive.

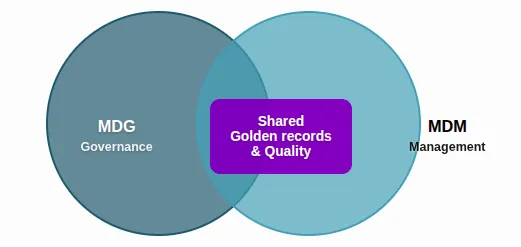

Master data governance (MDG) and master data management (MDM) are related but different.

Master data governance refers to the policies, procedures, standards, and accountability structures that define how master data is created, maintained, and used. It answers questions of ownership, definition, quality standards, and compliance. It is primarily organisational: roles, rules, and decision rights.

Master data management refers to the technology and processes for creating, storing, and distributing authoritative master data across the organisation. MDM systems provide methods for data collection, de-duplication, linking, and distribution. They are the tools that implement governance.

MDM supports MDG. Governance defines the rules; MDM enforces them at scale. You cannot implement effective MDM without clear governance. Equally, governance without some form of controlled data management — whether through dedicated MDM tools or disciplined operational processes — remains theoretical. The two are mutually dependent.

At its simplest, data governance is the set of decisions an organisation makes about how data is managed, who is responsible for it, and what standards apply. Master data governance narrows that scope to the most consequential data — the reference data that underpins transactions, reporting, and analytics across the business.

The relationship between data governance and data quality is direct. When governance is absent, quality degrades. Records accumulate errors. Definitions drift between teams. Reports conflict. Decisions are delayed because no one trusts the numbers.

The cost of poor data compounds over time. Research from SiriusDecisions summarises this as the 1-10-100 rule: it costs roughly $1 to verify a record as it is entered, $10 to cleanse and de-duplicate it after capture, and $100 per record if the error is left in place and the business keeps operating on it. The earlier governance is applied in the data lifecycle, the lower the cost. Reactive correction is expensive. Prevention at capture is economical.

Master data governance exists to prevent this. It does so not through technology, but through accountability, standards, and process.
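To make the 1-10-100 arithmetic concrete, the sketch below applies the three unit costs quoted above to a hypothetical volume of 5,000 flawed records. The volume and the stage names are assumptions chosen for illustration, and the rule itself is a rough heuristic rather than a measured cost model.

```python
# Unit costs from the 1-10-100 rule quoted above (USD per flawed record).
# The stage names are illustrative labels, not standard terminology.
COST_PER_RECORD = {
    "verify_at_entry": 1,
    "cleanse_after_capture": 10,
    "operate_on_bad_data": 100,
}


def total_cost(error_count: int, stage: str) -> int:
    """Estimated cost of dealing with `error_count` flawed records at `stage`."""
    return error_count * COST_PER_RECORD[stage]


# Hypothetical volume, chosen only to show the scale of the difference.
errors = 5_000
costs = {stage: total_cost(errors, stage) for stage in COST_PER_RECORD}

for stage, cost in costs.items():
    print(f"{stage}: ${cost:,}")
```

Under these assumptions the same 5,000 records cost $5,000 to verify at entry, $50,000 to cleanse later, and $500,000 if left uncorrected.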

Effective master data governance rests on a small number of principles. These are not abstract ideals. They are practical commitments that determine whether governance works or becomes bureaucratic overhead.

Ownership must be explicit. Every data domain — customer, supplier, product, location — needs a named owner. Not a system. Not a department. A person who is accountable for accuracy, completeness, and timeliness.

Definitions must be agreed. If “customer” means something different in sales, finance, and operations, no amount of technology will produce a single customer view. Master data governance requires that core terms are defined once and enforced consistently.

Quality is governed at capture, not in reports. Errors found in monthly reporting were created weeks or months earlier. Governance that waits for reporting to surface problems is always reactive. Controls must sit where data is created.

Authority must be clear. When a definition is challenged or a standard is disputed, someone must have authority to decide. Without this, governance stalls in consensus-seeking. Escalation paths need to be defined and respected.

Standards must be proportionate. Not every data field requires the same level of governance. Critical fields — those that drive financial reporting, regulatory compliance, or customer-facing decisions — deserve more attention than supporting attributes. Proportionality prevents governance from becoming unmanageable.

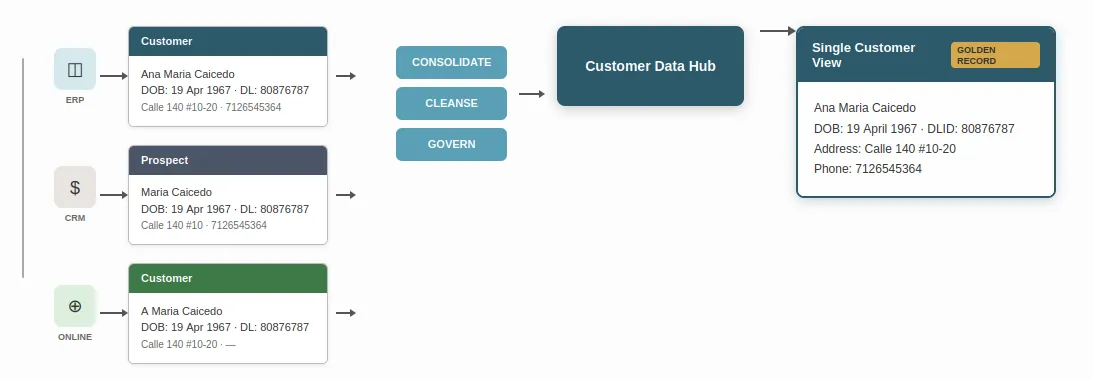

A practical example of what master data governance achieves is the single customer view — a consolidated, de-duplicated record that uniquely identifies each customer and their attributes across the business.

In many organisations, customer data resides in separate systems: CRM, ERP, billing, marketing, and support. Each system may hold different versions of the same customer — different spellings, addresses, or contact details. Duplicate records accumulate. No one can say which version is correct. Commercial teams cannot accurately identify total spend per customer. Marketing cannot target segments reliably. Service cannot see the full history.

Master data governance, supported by appropriate data management processes, enables de-duplication and merging of these records into a single, trusted view — often called a golden record. That view can then be shared across channels. Decisions are based on one version of the truth.

Achieving this requires governance decisions: who owns the customer domain, how “customer” is defined, what attributes are mandatory, how conflicts between source systems are resolved, and who has authority to approve merged records. Without these, technology alone cannot produce a reliable single view. With them, the organisation gains confidence in customer-facing decisions — from sales to support to compliance.
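The governance decisions listed above translate into two rules that any merge process needs: how records are matched, and which value survives when sources disagree. The following is a minimal sketch, assuming a matching rule of "same normalised email" and a survivorship rule of "most recently updated non-empty value wins". Both rules, the field names, and the sample records are hypothetical; in practice the domain owner would decide them.

```python
from collections import defaultdict
from datetime import date

# Hypothetical source records, for illustration only.
records = [
    {"source": "CRM", "name": "Acme Ltd", "email": "Accounts@Acme.example",
     "phone": "", "updated": date(2024, 3, 1)},
    {"source": "ERP", "name": "ACME Limited", "email": "accounts@acme.example ",
     "phone": "020 7946 0000", "updated": date(2023, 11, 15)},
    {"source": "Billing", "name": "Beta GmbH", "email": "billing@beta.example",
     "phone": "", "updated": date(2024, 1, 10)},
]


def match_key(record: dict) -> str:
    """Assumed matching rule: the same normalised email means the same customer."""
    return record["email"].strip().lower()


def merge(group: list[dict]) -> dict:
    """Assumed survivorship rule: the most recently updated non-empty value wins."""
    golden = {
        "email": match_key(group[0]),
        "sources": sorted(r["source"] for r in group),
    }
    for field in ("name", "phone"):
        candidates = [r for r in group if r[field]]
        newest = max(candidates, key=lambda r: r["updated"], default=None)
        golden[field] = newest[field] if newest else None
    return golden


# Group records that share a match key, then merge each group.
groups = defaultdict(list)
for record in records:
    groups[match_key(record)].append(record)

golden_records = [merge(group) for group in groups.values()]
```

Here the CRM and ERP entries collapse into one Acme record that takes its name from the newer CRM entry and its phone number from ERP, the only source that holds one. Changing either rule changes the golden record, which is why the rules need an owner.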

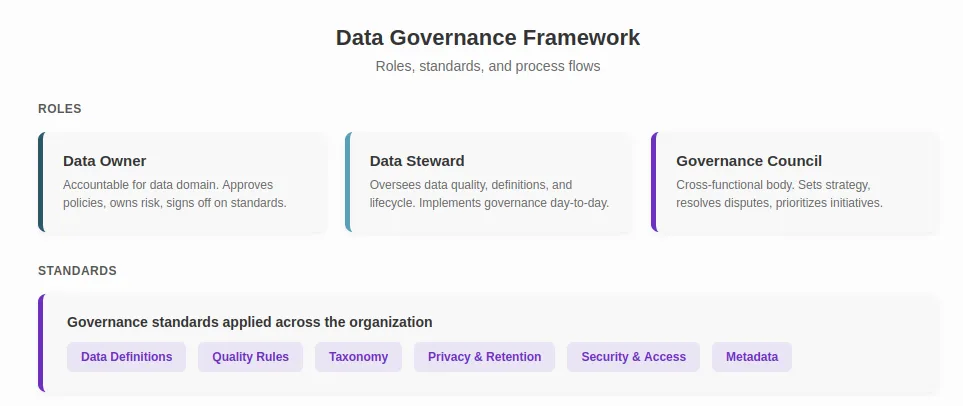

A data governance framework is the structure within which governance operates. It defines roles, responsibilities, standards, and the processes that connect them.

A practical framework includes:

These roles do not need to be full-time positions. In most organisations, they are responsibilities held alongside existing roles. What matters is that they are formally assigned, not assumed.

The framework must define what good data looks like. This includes naming conventions, mandatory fields, validation rules, and de-duplication criteria. These standards should be documented, accessible, and enforceable — not buried in policy documents that no one reads.
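One way to make such standards enforceable is to express them as checks that run when a record is created. The sketch below assumes a hypothetical supplier standard: the field names, the `SUP-` identifier convention, and the two-letter country code are all invented for illustration and are not drawn from any particular system.

```python
import re

# Hypothetical supplier standard; every name and pattern here is an assumption.
MANDATORY_FIELDS = ("supplier_id", "legal_name", "country_code")
FORMAT_RULES = {
    "supplier_id": re.compile(r"SUP-\d{6}"),   # assumed naming convention
    "country_code": re.compile(r"[A-Z]{2}"),   # two uppercase letters
}


def _text(record: dict, field: str) -> str:
    """Return the field as stripped text, treating None as empty."""
    value = record.get(field)
    return "" if value is None else str(value).strip()


def validate(record: dict) -> list[str]:
    """Return a list of rule violations; an empty list means the record passes."""
    problems = []
    for field in MANDATORY_FIELDS:
        if not _text(record, field):
            problems.append(f"missing mandatory field: {field}")
    for field, pattern in FORMAT_RULES.items():
        value = _text(record, field)
        # Format is only checked when a value is present; absence is reported above.
        if value and not pattern.fullmatch(value):
            problems.append(f"invalid format for {field}: {value!r}")
    return problems


good = {"supplier_id": "SUP-000123", "legal_name": "Acme Ltd", "country_code": "GB"}
bad = {"supplier_id": "sup-12", "legal_name": "Acme Ltd"}
```

Calling `validate(good)` returns an empty list, while `validate(bad)` reports the missing country code and the malformed identifier. Keeping the rules in data structures rather than scattered through application code makes them easier to review and to change through a controlled process.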

Any framework must address two ongoing activities. First, how changes to standards are proposed, assessed, and approved. Second, how compliance with existing standards is measured and enforced.

Without change management, standards erode over time. Without compliance monitoring, governance exists on paper but not in practice.

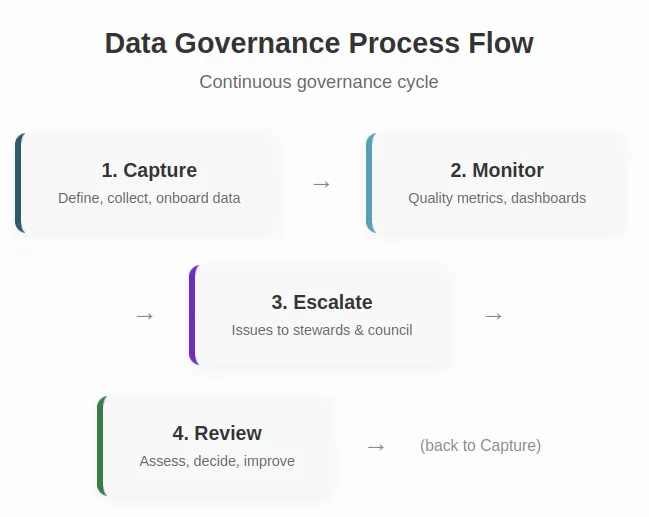

A data governance plan describes how governance will be established. The data governance process describes how it operates day to day.

In practice, the process involves:

The most effective data governance processes are embedded in operational workflows. They are not separate activities performed by a governance team in isolation. When governance is disconnected from operations, compliance drops and resentment builds.

Across industries, certain patterns consistently distinguish governance that works from governance that does not.

Start with high-impact data domains. Do not attempt to govern everything at once. Begin with the data that creates the most pain — typically customer, supplier, or product master data. Demonstrate value before expanding scope.

Secure executive sponsorship. Governance without leadership support is advisory at best. When data owners lack authority, standards are treated as suggestions. Executive sponsorship gives governance the weight it needs to change behaviour.

Measure what matters. Define a small number of data quality metrics and track them consistently. Percentage of duplicate records. Completeness of mandatory fields. Time to resolve data issues. These metrics make governance visible and defensible.
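Two of these metrics can be computed from the records alone. The sketch below is a minimal illustration, assuming that duplicates are identified by a case-insensitive email match and that a field counts as complete when it is neither empty nor missing. The sample records and field names are hypothetical, and time to resolve data issues is left out because it depends on issue-tracking data rather than on the records themselves.

```python
# Hypothetical customer records; None or "" marks a missing value.
records = [
    {"id": 1, "name": "Acme Ltd", "email": "accounts@acme.example", "country": "GB"},
    {"id": 2, "name": "Acme Ltd", "email": "ACCOUNTS@acme.example", "country": ""},
    {"id": 3, "name": "Beta GmbH", "email": "billing@beta.example", "country": "DE"},
    {"id": 4, "name": "Gamma SA", "email": None, "country": "FR"},
]
MANDATORY = ("name", "email", "country")


def email_key(record: dict):
    """Assumed duplicate rule: records with the same lower-cased email are duplicates."""
    return record["email"].lower() if record["email"] else None


def duplicate_rate(rows: list[dict], key) -> float:
    """Share of records whose key repeats that of an earlier record."""
    seen, duplicates = set(), 0
    for row in rows:
        k = key(row)
        if k is None:
            continue  # records without a key cannot be matched
        if k in seen:
            duplicates += 1
        else:
            seen.add(k)
    return duplicates / len(rows) if rows else 0.0


def completeness(rows: list[dict], fields: tuple) -> float:
    """Share of mandatory field values that are actually filled in."""
    total = len(rows) * len(fields)
    filled = sum(1 for row in rows for f in fields if row.get(f))
    return filled / total if total else 0.0


dup = duplicate_rate(records, email_key)
comp = completeness(records, MANDATORY)
print(f"duplicate rate: {dup:.0%}, completeness: {comp:.1%}")
```

With this sample, one of the four records is a duplicate (25%) and ten of the twelve mandatory values are present (83.3%). Tracked over time, figures like these show whether governance is changing the data or only the documentation.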

Separate governance from technology. Data governance solutions — tools for cataloguing, lineage, quality monitoring — can support governance. They cannot replace it. Organisations that purchase governance tools without first defining roles, standards, and processes find that the tools are underused or misapplied.

Treat governance as ongoing, not as a project. Governance is an operating discipline. It does not have a completion date. Organisations that treat it as a one-off initiative find that standards decay within months of the project closing.

The failure modes are predictable. Understanding them is as important as understanding best practice.

Governance is delegated entirely to IT. When governance sits exclusively within technology teams, it becomes disconnected from business reality. Data owners in the business disengage. Standards reflect system constraints rather than business needs.

No authority behind decisions. Governance councils that recommend but cannot enforce produce standards that are ignored under operational pressure. Authority must be real, not notional.

Complexity kills adoption. Frameworks with dozens of roles, hundreds of policies, and elaborate approval workflows collapse under their own weight. Practical governance is proportionate to organisational capacity.

Maturity is measured but not acted on. Data governance maturity models — including those referenced by analyst firms such as Gartner — provide a useful diagnostic lens. They describe levels of capability from initial and reactive through to optimised and predictive. However, measuring maturity without acting on findings produces assessments, not improvement. Maturity models are useful when they drive specific, prioritised actions.

Master data governance does not operate in isolation. It connects directly to enterprise data governance — the broader discipline that covers all data across the organisation, not just master data.

A coherent data governance strategy positions master data governance within this wider context. It clarifies how governance of reference data relates to governance of transactional data, analytics data, and regulatory data. It ensures that standards, roles, and processes are consistent rather than duplicated across domains.

For organisations pursuing an enterprise data framework, master data governance is foundational. Strategy defines intent. Governance makes that intent enforceable. Without governance, strategy produces aspirations that cannot be sustained.

Master data governance is not an end in itself. It exists to support confident decision-making. When customer data is trusted, commercial decisions are better informed. When supplier data is clean, procurement negotiations are grounded in fact. When product data is consistent, reporting reflects reality.

For organisations considering AI readiness or advanced analytics, master data governance determines whether model inputs are reliable. Analytics built on ungoverned master data inherit every inconsistency in the source.

Independent enterprise data advisory helps leadership teams evaluate where governance stands today, what is proportionate to their risk profile, and which actions will deliver the most value. This work sits upstream of tools and platforms — it creates the conditions under which technology investments succeed.

For data governance examples drawn from real-world advisory work, see Logistics governance examples or the Failed Data Automation Initiative case study.